Cube AI Agent: Designing AI That Fits Into How People Actually Work

The Problem

When Cube started building AI features, the question wasn't just "what should AI do?" — it was "where does it live, and how does it show up consistently across everything?" As the product expanded across the web portal, Slack, and a spreadsheet plugin, there was a real risk of AI feeling scattered — different interactions, different patterns, different levels of trust depending on where you were. My job was to figure out the experience foundation before that happened.

The Real Challenge: Alignment Before Design

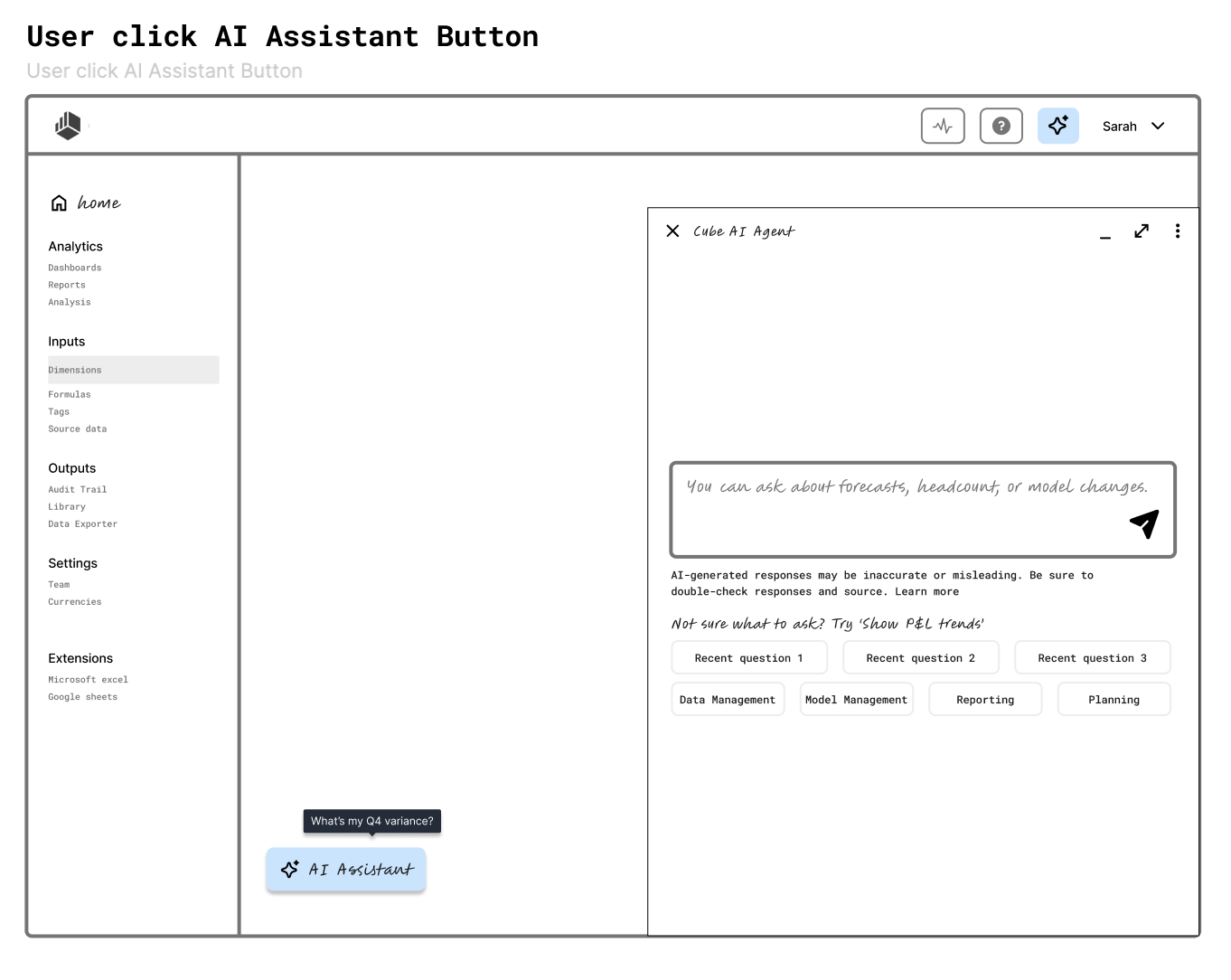

The hardest part of this project wasn't the UI. It was getting cross-functional alignment on what AI should and shouldn't do in the product. Before I could design anything meaningful, I needed to answer: what actions can AI take, what does it surface, and how does it hand off to the user? I used an early lo-fi wireframe of the portal experience as an alignment artifact — not to show the final design, but to give teams something concrete to react to. That wireframe became the foundation that subsequent epics built from.

Research: Understanding How FP&A Users Actually Work

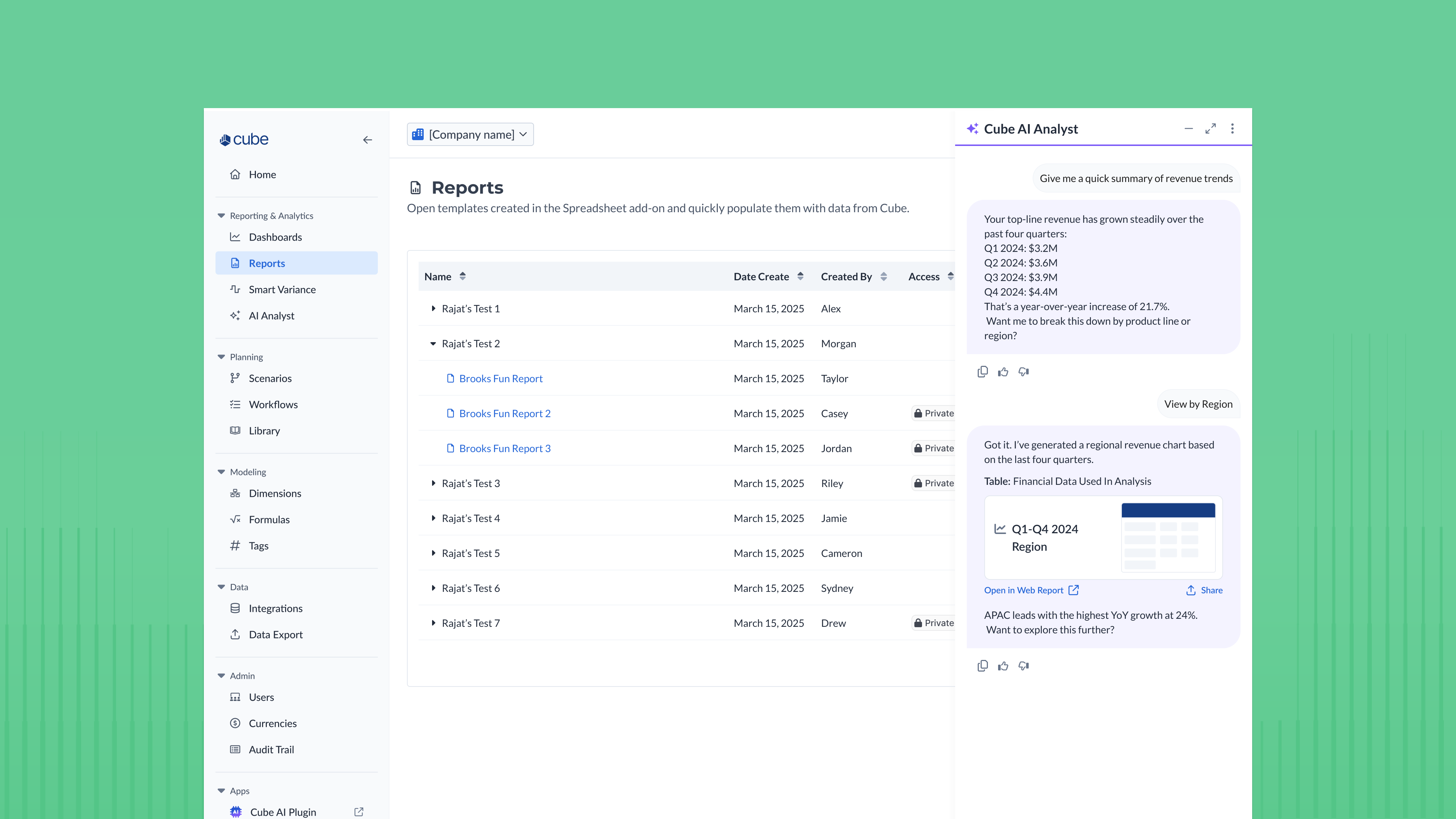

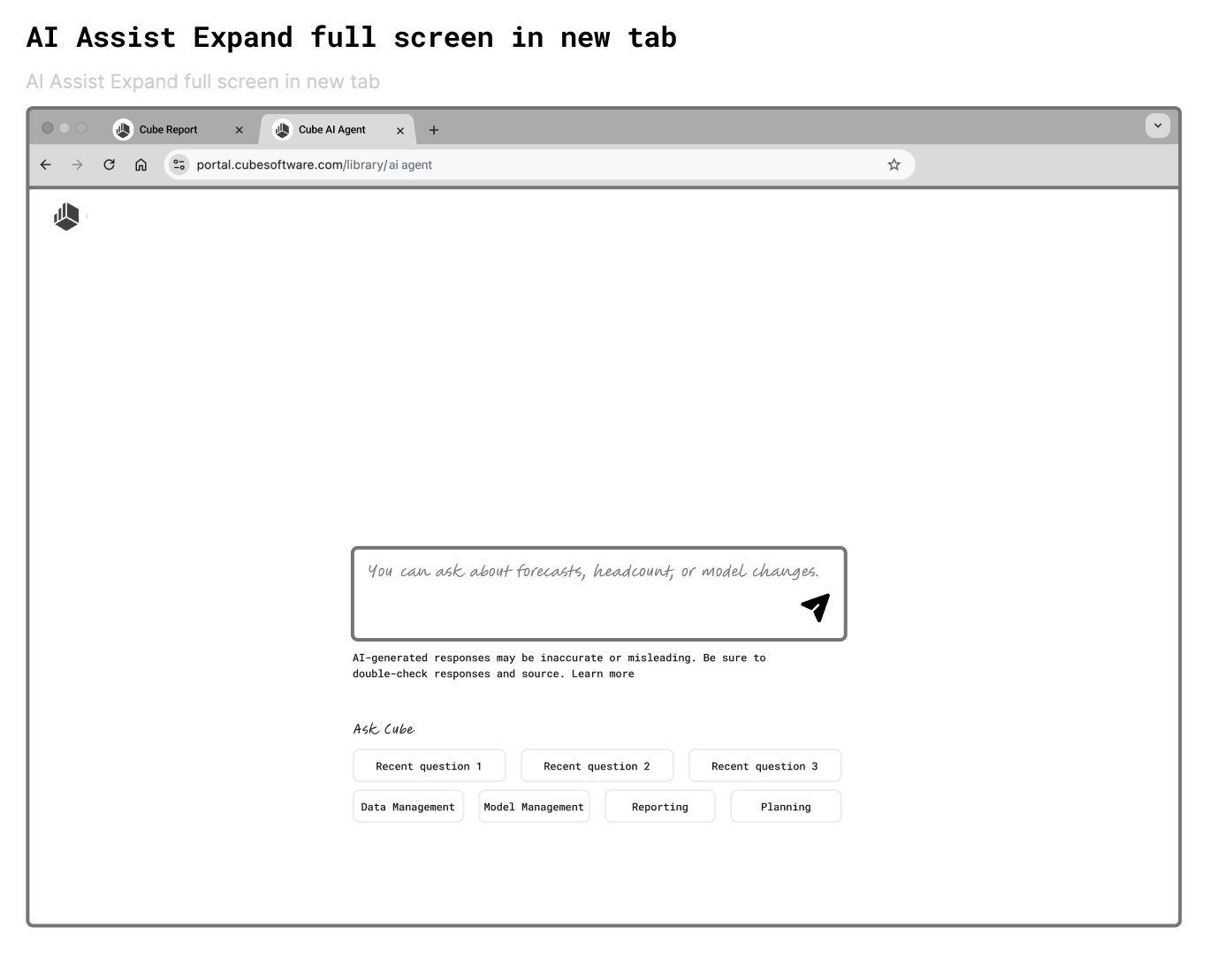

I partnered with a product expert to study real user workflows. The key insight was simple but shaped everything: our users multitask constantly — multiple tabs, multiple tools open at once. That meant AI output couldn't be blocking or demanding attention at the wrong moment. It needed to be available when users wanted it, invisible when they didn't. This led directly to two decisions: placing the AI trigger near natural points of intent, and adding an "Open in New Tab" option so users could engage with AI without losing their place.

Design Decisions I'm Most Proud Of

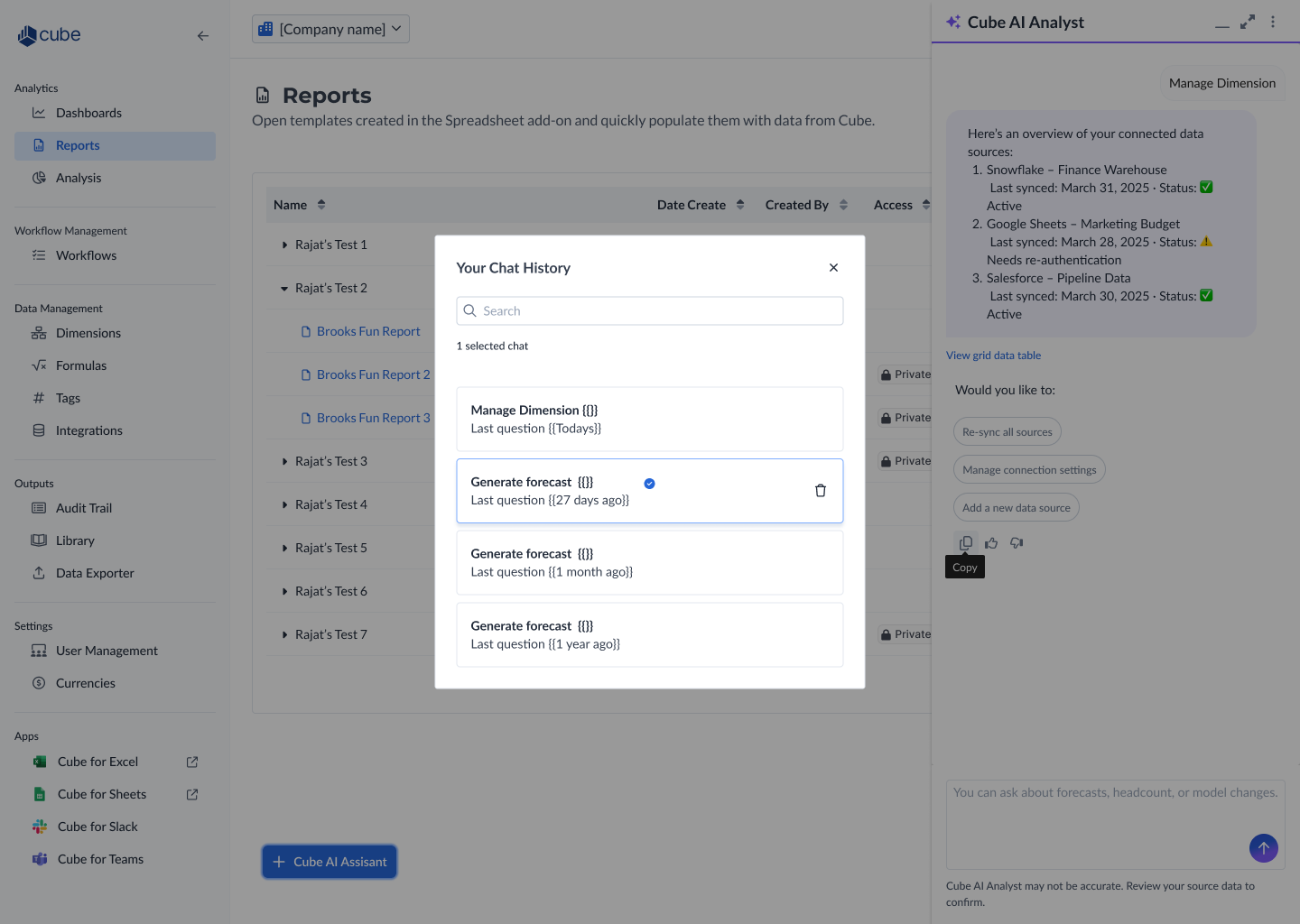

The progressive disclosure approach was the thing I kept coming back to throughout the project. The AI Agent starts as a lightweight panel — unobtrusive, easy to dismiss. From there, users can expand to full screen or open in a new tab depending on how deep they want to go. This wasn't just a UI pattern choice — it was a deliberate stance on how AI should behave in a workflow tool. It shouldn't demand your full attention. It should earn it.
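One way to picture the progressive disclosure model is as a small state machine. The sketch below is illustrative only: the view names, action names, and the `nextView` function are hypothetical and are not taken from Cube's codebase. It assumes full screen can collapse back to the panel, which the description above does not state.

```typescript
// Hypothetical views for the AI Agent, from least to most immersive.
type AgentView = "closed" | "panel" | "fullscreen" | "newTab";

// Hypothetical user actions that move between views.
type AgentAction = "open" | "expand" | "collapse" | "openInNewTab" | "dismiss";

// Allowed transitions. Anything not listed leaves the view unchanged,
// so the agent never escalates without an explicit user action.
const transitions: Record<AgentView, Partial<Record<AgentAction, AgentView>>> = {
  closed: { open: "panel" }, // always starts as the lightweight panel
  panel: { expand: "fullscreen", openInNewTab: "newTab", dismiss: "closed" },
  fullscreen: { collapse: "panel", dismiss: "closed" }, // collapse is an assumption
  newTab: { dismiss: "closed" },
};

function nextView(current: AgentView, action: AgentAction): AgentView {
  return transitions[current][action] ?? current;
}

// Example: open the panel, then go deeper only when the user asks.
let view: AgentView = "closed";
view = nextView(view, "open");
view = nextView(view, "expand");
console.log(view);
```

Modeling the views this way makes the "earn attention" stance explicit: the only path to full screen or a new tab runs through a user-initiated action from the panel.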

I'm proud of the chat history scoping decision for a different reason. For the first release, I intentionally kept it simple: a modal with search that shows recent sessions and is easy to dismiss. I could have designed something more robust, but I made a conscious call to start lightweight and leave room to evolve. If usage grows, the system can expand to support tags, folders, and export. That kind of intentional restraint is harder to defend than building everything upfront, but it's the right call for a v1 with an uncertain adoption curve.

Consistency Across Channels

As the work expanded to Slack and the homepage, the core design principles carried over: persistent low-friction entry, trust signals (AI disclaimer, feedback thumbs), suggested follow-ups to reduce cognitive load, and non-blocking output. The Slack plugin adapted the pattern for a messaging context — lighter, faster, with a "Share to channel" action that made AI output collaborative rather than private. The goal was that a user moving between the portal and Slack would feel like they were talking to the same AI, not two different products.
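The shared principles above could be captured as one base definition with small per-surface overrides. This is a minimal sketch under assumptions: the `AgentSurfaceSpec` shape, the field names, and the `specFor` helper are hypothetical and only illustrate the idea. They do not describe how Cube implements it.

```typescript
// Surfaces named in this case study.
type Surface = "portal" | "slack" | "homepage";

// Hypothetical spec: one field per cross-channel principle.
interface AgentSurfaceSpec {
  persistentEntry: boolean;    // low-friction entry point is always available
  showAiDisclaimer: boolean;   // trust signal
  showFeedbackThumbs: boolean; // trust signal
  suggestedFollowUps: boolean; // reduces cognitive load
  blocking: boolean;           // output should never block the user's workflow
  extraActions: string[];      // surface-specific actions
}

// Defaults every surface inherits.
const baseSpec: AgentSurfaceSpec = {
  persistentEntry: true,
  showAiDisclaimer: true,
  showFeedbackThumbs: true,
  suggestedFollowUps: true,
  blocking: false,
  extraActions: [],
};

// Only the differences are declared per surface.
const surfaceOverrides: Partial<Record<Surface, Partial<AgentSurfaceSpec>>> = {
  portal: { extraActions: ["openInNewTab"] },
  slack: { extraActions: ["shareToChannel"] },
};

function specFor(surface: Surface): AgentSurfaceSpec {
  return { ...baseSpec, ...surfaceOverrides[surface] };
}

console.log(specFor("slack").extraActions);
```

Keeping the trust signals and non-blocking behavior in the base definition means a new surface gets them by default and has to opt out deliberately.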

Outcome

The AI Agent shipped across the portal and Slack. The two product launches below show the experience in the wild — including the AI-powered homepage and the updated portal UI. While we don't have formal usage metrics yet, qualitative feedback pointed to the trust signals and non-blocking interaction model as things that made it feel different from typical AI bolted onto a product. The progressive disclosure model also gave engineering a clear, phased implementation path, which kept scope manageable across multiple epics.

What's Next

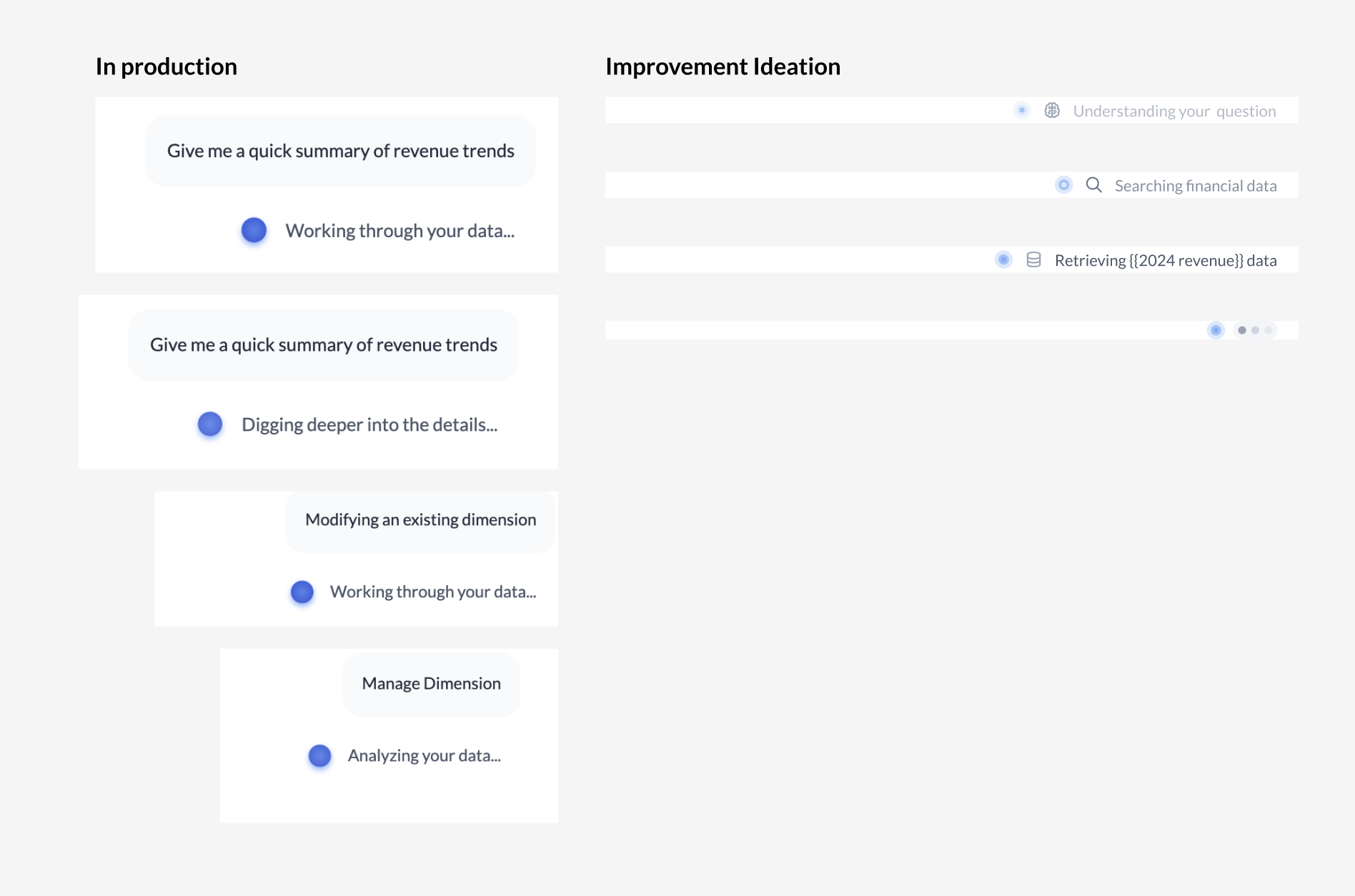

Looking back, I'd push earlier for a shared AI interaction spec: a single document defining how AI behaves across all surfaces, written before individual epics kick off. Alignment happened through iteration rather than upfront, and with a product expanding this fast, documenting that foundation earlier would have reduced design drift between channels.

I'm also continuing to explore how micro-interactions build trust in AI systems. Designing trustworthy AI isn't just about what the AI says; it's about how it communicates while it's working. Loading states, progress signals, and transparent reasoning cues all shape whether users trust the output before they even see it. This is the next layer I want to bring into the Cube AI experience.